Efficiently evaluating LLM applications requires robust tooling to collect and analyze feedback. W&B Weave provides an integrated feedback system, allowing users to provide Call feedback directly through the UI or programmatically through the SDK. Various feedback types are supported, including emoji reactions, textual comments, and structured data, enabling teams to:

- Build evaluation datasets for performance monitoring.

- Identify and resolve LLM content issues effectively.

- Gather examples for advanced tasks like fine-tuning.

Provide feedback in the UI

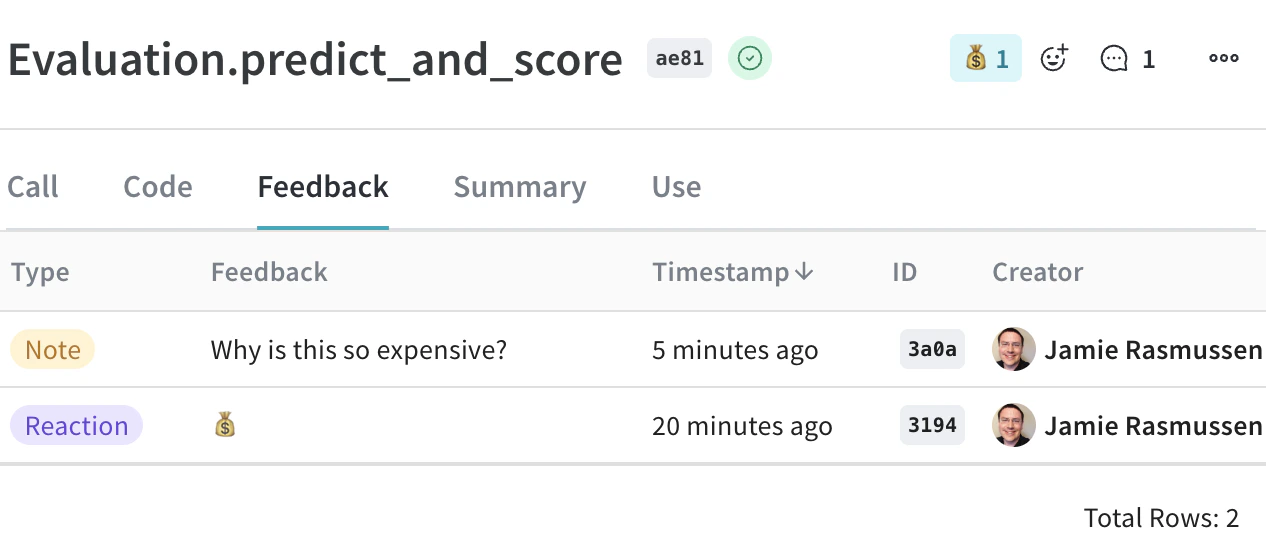

In the Weave UI, you can add and view feedback from the Call details panel or using the icons.

From the Call details panel

- In the Weave project sidebar, navigate to Traces.

- Find the row for the Call that you want to add feedback to.

- Click the linked Trace name to open the trace tree and Call details panel.

- In the Call details tab bar, select Feedback.

- Add, view, or delete feedback:

  - Add and view feedback using the icons located in the upper right corner of the Call details feedback view.

  - View and delete feedback from the Call details feedback table. To delete feedback, click the trash can icon in the rightmost column of the appropriate feedback row.

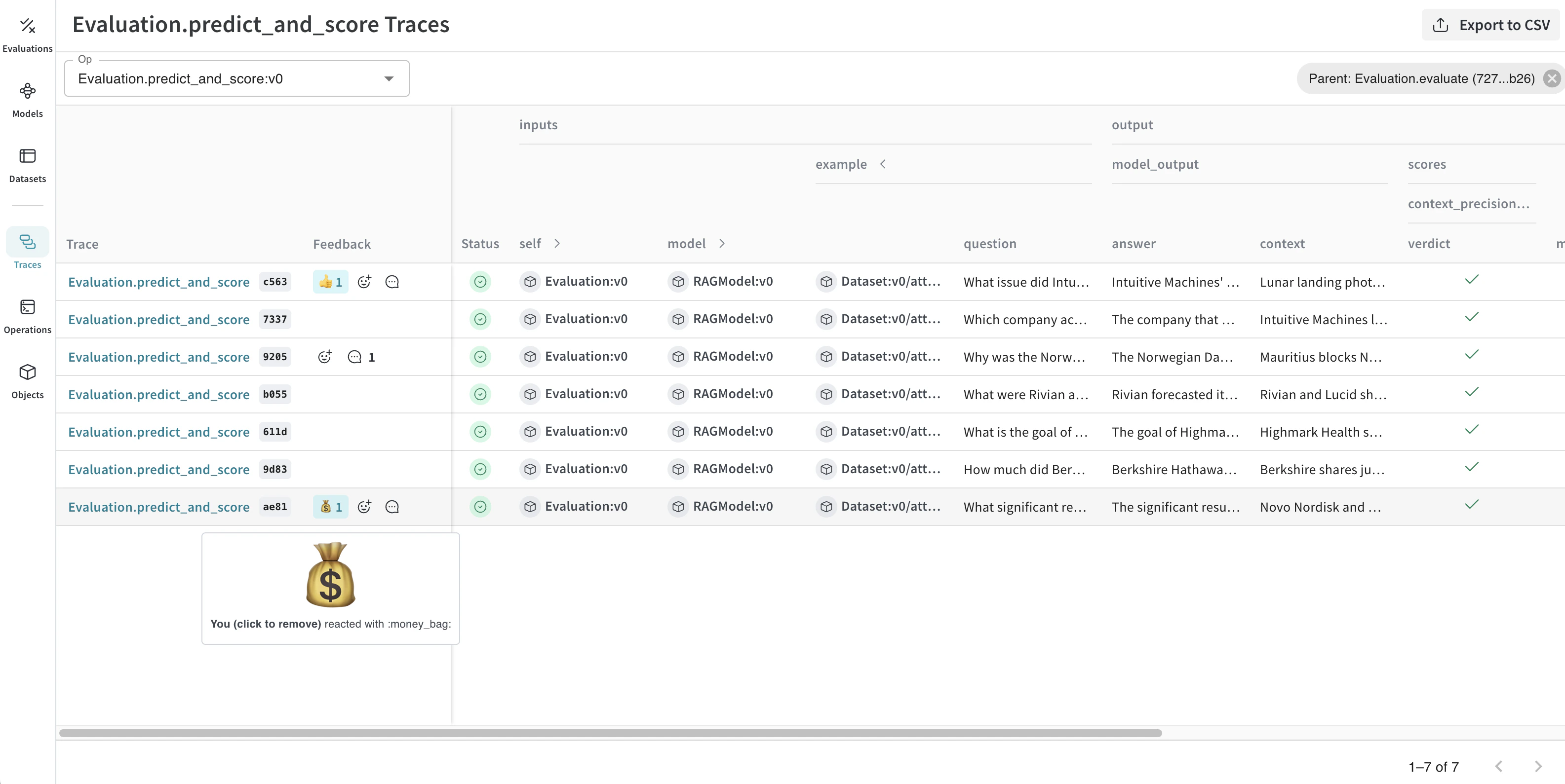

Use the feedback icons

You can add or remove a reaction and add a note using the icons, which are located in both the Traces table and each Call details panel.

- Traces table: Located in the Feedback column of the appropriate row in the Traces table.

- Call details panel: Located in the upper right corner of each Call details panel.

To add a reaction:

- Click the emoji icon.

- Add a thumbs up, thumbs down, or click the + icon for more emojis.

To remove a reaction:

- Hover over the emoji reaction you want to remove.

- Click the reaction to remove it.

You can also delete feedback from the Feedback column on the Call details panel.

To add a comment:

- Click the comment bubble icon.

- In the text box, add your note. The maximum number of characters in a feedback note is 1024.

- To save the note, press the Enter key. You can add additional notes.

Provide feedback through the SDK

You can find SDK usage examples for feedback in the UI under the Use tab in the Call details panel. You can use the Weave Python SDK to programmatically add, remove, and query feedback on Calls. The TypeScript SDK does not currently support feedback functionality.

Query a project’s feedback

You can query the feedback for your Weave project using the SDK. The SDK supports the following feedback query operations:

- `client.get_feedback()`: Returns all feedback in a project.

- `client.get_feedback("<feedback_uuid>")`: Returns a specific feedback object specified by `<feedback_uuid>` as a collection.

- `client.get_feedback(reaction="<reaction_type>")`: Returns all feedback objects for a specific reaction type.

Each feedback object returned by `client.get_feedback()` includes the following fields:

- `id`: The feedback object ID.

- `created_at`: The creation time information for the feedback object.

- `feedback_type`: The type of feedback (reaction, note, custom).

- `payload`: The feedback payload.
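The query operations and fields described above can be sketched as follows. This is a minimal sketch, not a definitive implementation: `feedback_rows` is a hypothetical helper, `client` is assumed to be the object returned by `weave.init(...)`, and the project name in the usage comment is a placeholder.

```python
from typing import Any, Optional


def feedback_rows(client: Any, reaction: Optional[str] = None) -> list:
    """Return the documented fields of each feedback object as plain dicts.

    `client` is assumed to be the object returned by `weave.init(...)`.
    `feedback_rows` is a hypothetical helper, not part of the SDK.
    """
    if reaction is None:
        items = client.get_feedback()  # all feedback in the project
    else:
        items = client.get_feedback(reaction=reaction)  # one reaction type only
    return [
        {
            "id": f.id,
            "created_at": f.created_at,
            "feedback_type": f.feedback_type,
            "payload": f.payload,
        }
        for f in items
    ]


# Usage against a real project (requires the `weave` package and W&B credentials):
#   import weave
#   client = weave.init("intro-example")  # placeholder project name
#   for row in feedback_rows(client, reaction="👍"):
#       print(row["id"], row["feedback_type"], row["payload"])
```

Returning plain dicts keeps the result easy to print or load into a table, at the cost of dropping any other attributes the feedback objects may carry.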


Add feedback to a Call

You can add feedback to a Call using the Call’s UUID. To use the UUID to get a particular Call, retrieve it during or after Call execution. The SDK supports the following operations for adding feedback to a Call:

- `call.feedback.add_reaction("<reaction_type>")`: Add one of the supported `<reaction_types>` (emojis), such as 👍.

- `call.feedback.add_note("<note>")`: Add a note.

- `call.feedback.add("<label>", <object>)`: Add a custom feedback `<object>` specified by `<label>`.
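The three operations for adding feedback can be sketched together as shown here. The helper name `add_example_feedback`, the note text, the `"correctness"` label, and its payload are illustrative assumptions. The usage comment also assumes that `client.get_call("<call_uuid>")` is how you look up a Call by its UUID, so check that against your SDK version.

```python
from typing import Any


def add_example_feedback(call: Any) -> None:
    """Attach a reaction, a note, and a custom payload to a Call.

    `call` is assumed to be a Weave Call object. The note text, the
    "correctness" label, and the payload are illustrative values only.
    """
    call.feedback.add_reaction("👍")  # emoji reaction
    call.feedback.add_note("this is a note")  # free-text note
    call.feedback.add("correctness", {"value": 5})  # custom label and object


# Usage against a real project (requires the `weave` package and W&B credentials):
#   import weave
#   client = weave.init("intro-example")  # placeholder project name
#   add_example_feedback(client.get_call("<call_uuid>"))
```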


Retrieve the Call UUID

For scenarios where you need to add feedback immediately after a Call, you can retrieve the Call UUID programmatically during or after the Call execution.

During Call execution

To retrieve the UUID during Call execution, get the current Call, and return the ID.
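A minimal sketch of this pattern, assuming `weave.require_current_call()` returns the Call currently being traced. The op is defined inside a small factory function (`build_operation`, a hypothetical name) only so that the snippet can be loaded without the SDK installed. In an application you would more typically define the op at module level after calling `weave.init`.

```python
def build_operation():
    """Return a Weave op that reports its own Call ID alongside its output."""
    import weave  # deferred so this snippet can be loaded without the SDK

    @weave.op()
    def simple_operation(input_value: str):
        output = f"Processed {input_value}"
        current_call = weave.require_current_call()  # the Call being traced
        return output, current_call.id

    return simple_operation


# Usage against a real project (requires the `weave` package and W&B credentials):
#   import weave
#   weave.init("intro-example")  # placeholder project name
#   output, call_id = build_operation()("example input")
```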


After Call execution

Alternatively, you can use the `call()` method to execute the operation and retrieve the ID after Call execution:
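As a sketch, and assuming that an op's `call()` method returns a `(result, call)` pair, a hypothetical helper such as `run_and_get_call_id` could wrap the pattern:

```python
from typing import Any, Tuple


def run_and_get_call_id(op: Any, *args: Any, **kwargs: Any) -> Tuple[Any, Any]:
    """Run a Weave op through its `call()` method and return (result, call_id).

    Assumes `op.call(...)` returns the op's result together with the Call object.
    """
    result, call = op.call(*args, **kwargs)
    return result, call.id


# Usage against a real project (requires the `weave` package and W&B credentials):
#   import weave
#   weave.init("intro-example")  # placeholder project name
#
#   @weave.op()
#   def simple_operation(input_value):
#       return f"Processed {input_value}"
#
#   result, call_id = run_and_get_call_id(simple_operation, "example input")
```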


Delete feedback from a Call

You can delete feedback from a particular Call by specifying a UUID.
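A minimal sketch of deletion, assuming the Call's feedback collection exposes a `purge` method that accepts the feedback UUID. Verify the method name against your SDK version. `delete_feedback` is a hypothetical wrapper.

```python
from typing import Any


def delete_feedback(call: Any, feedback_uuid: str) -> None:
    """Delete one feedback object from a Call by its UUID.

    Assumes `call.feedback.purge(<uuid>)` is the SDK's deletion call.
    """
    call.feedback.purge(feedback_uuid)


# Usage against a real project (requires the `weave` package and W&B credentials):
#   import weave
#   client = weave.init("intro-example")  # placeholder project name
#   delete_feedback(client.get_call("<call_uuid>"), "<feedback_uuid>")
```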


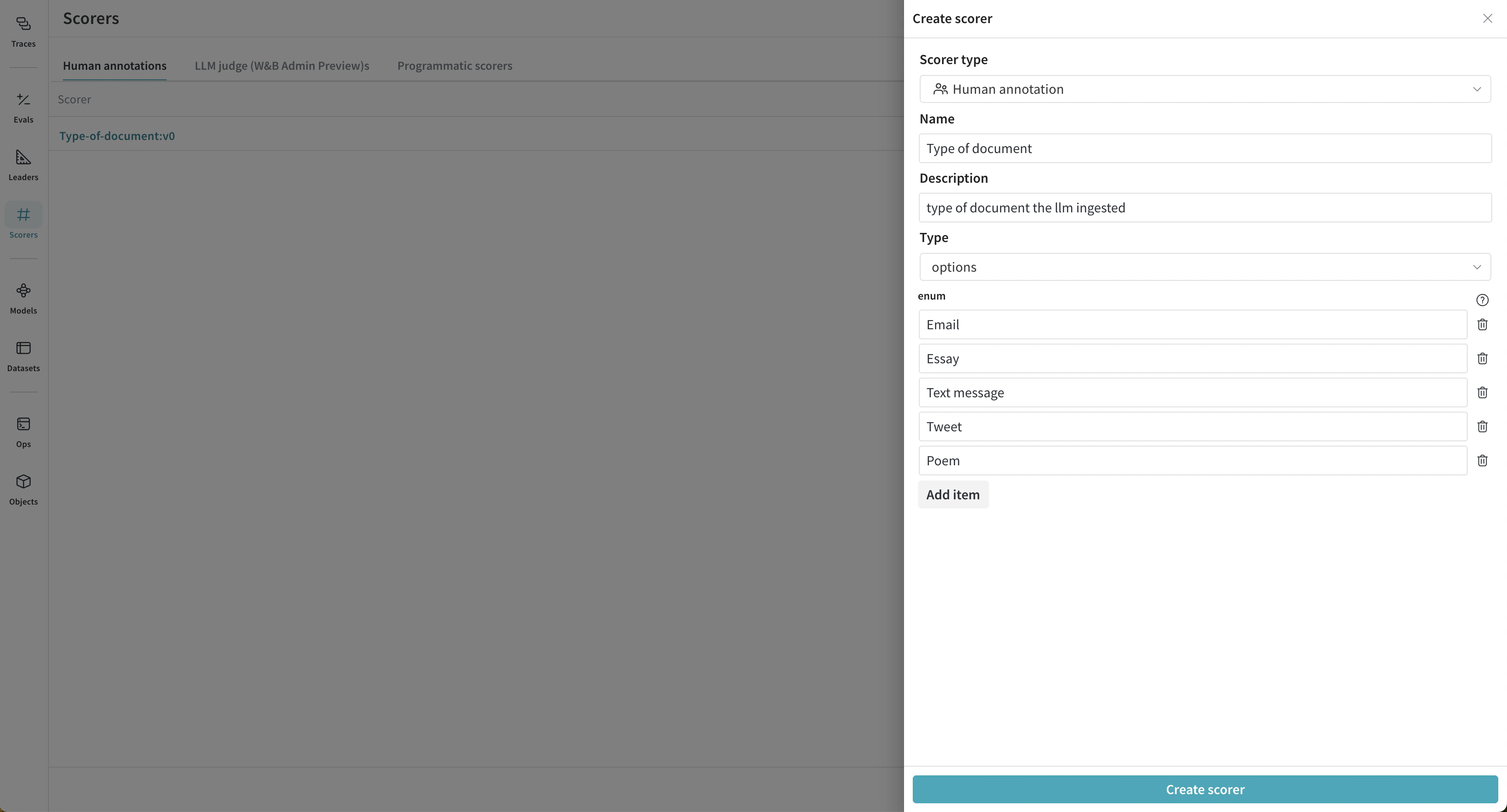

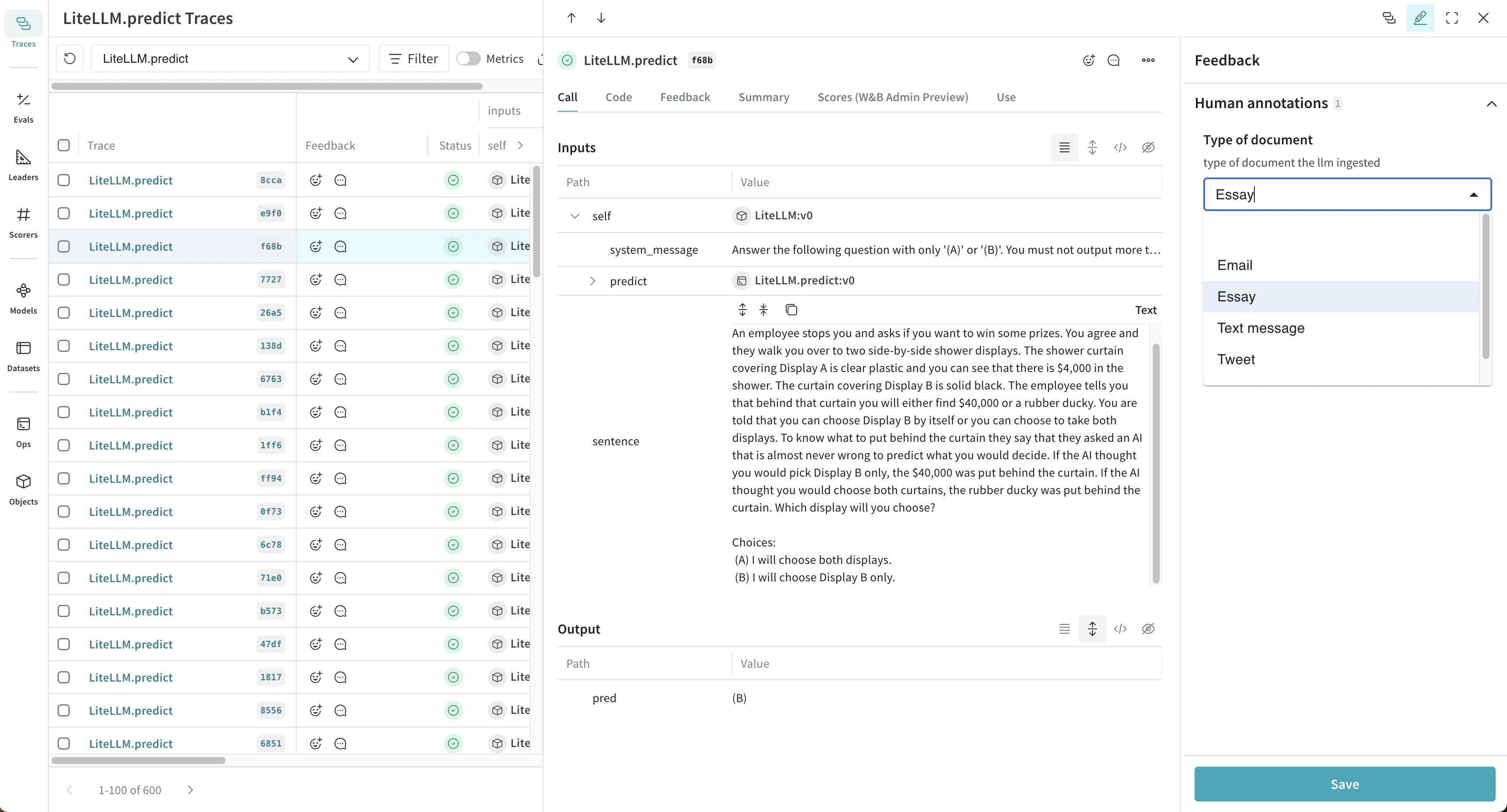

Add human annotations

Human annotations are supported in the Weave UI. This functionality allows you to create custom fields to add additional, human-entered data to your Traces as feedback. To make human annotations, you must first create a Human Annotation scorer using either the UI or the API. Then, you can use the scorer in the UI to make annotations, and modify your annotation scorers using the API.

Create a human annotation scorer in the UI

To create a human annotation scorer in the UI, do the following:

- In the project sidebar, navigate to Assets.

- In the Assets navigation panel, click Scorers.

- In the Scorers panel header, click New scorer.

- In the Create Scorer modal dialog, set:

  - Scorer type to Human annotation

  - Name

  - Description

  - Type, which determines the type of feedback that will be collected, such as boolean or integer.

- Click Create scorer. Now, you can use your scorer to make annotations.

In the following example, the Type selected for the score configuration is an enum containing the possible document types.

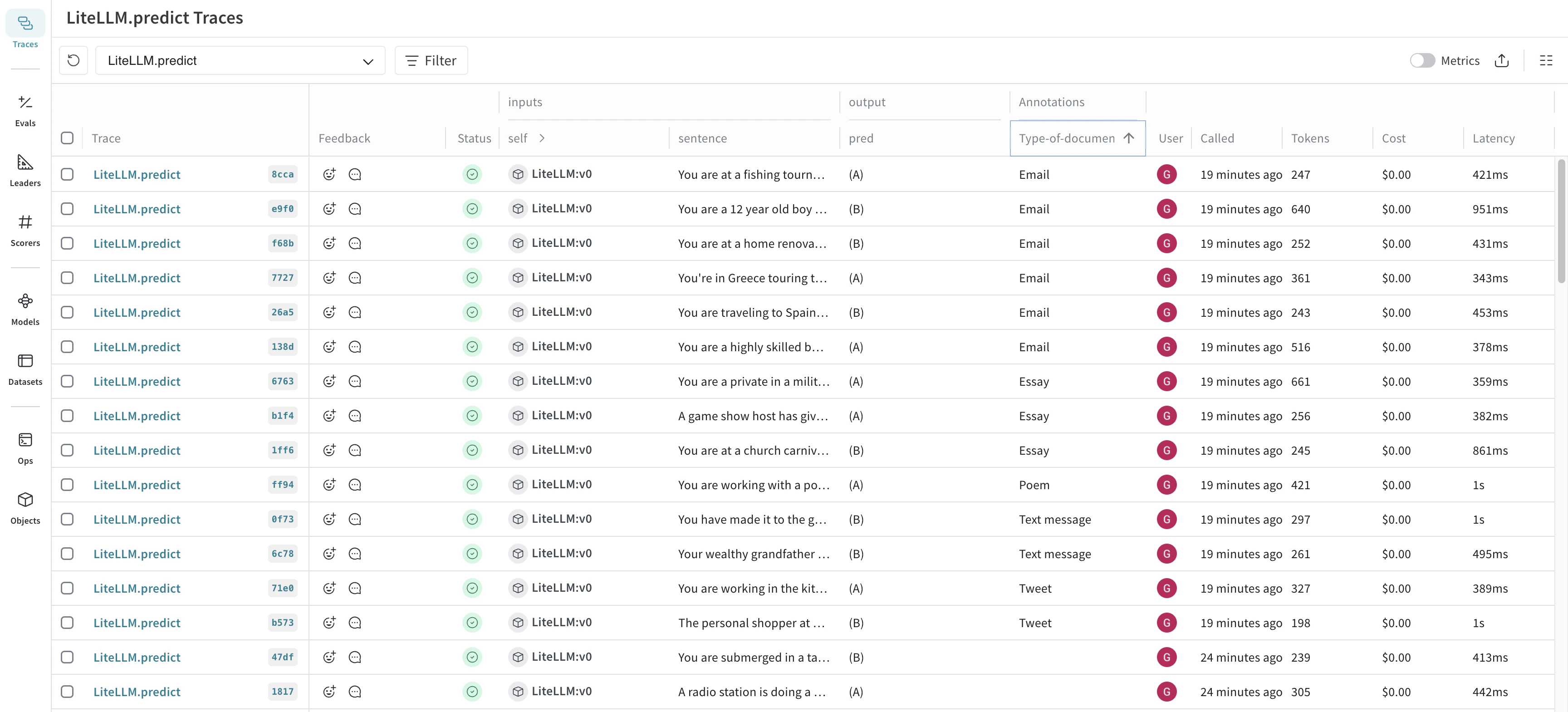

Use the human annotation scorer in the UI

Once you create a human annotation scorer, it becomes available to use on the Traces page. To use the scorer, do the following:

- In the project sidebar, navigate to Traces.

- Find the row for the Call that you want to add a human annotation to.

- Click the linked Trace name to open the trace tree and Call details panel.

- In the upper right corner of the Call details tab bar, click the Show feedback button.

- Make an annotation.

- Click Save.

- In the Call details panel tab bar, click the Feedback tab to view the Feedback table. The new annotation displays in the table. You can also view the annotations in the Annotations column in the main Traces table.

Refresh the Traces table to view the most up-to-date information.

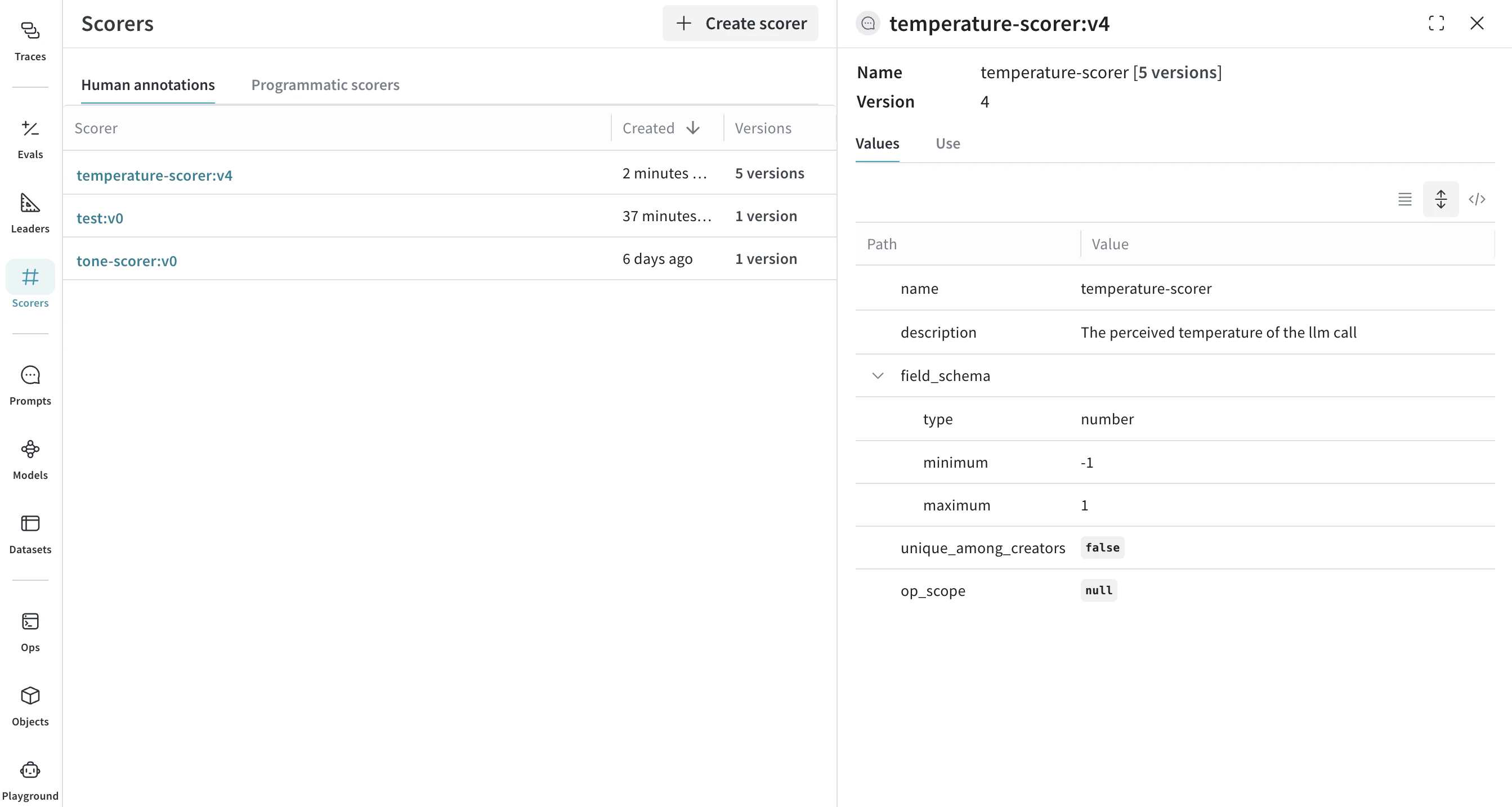

Create a human annotation scorer using the API

You can also create human annotation scorers through the API. Each scorer is its own object, which you create and update independently. To create a human annotation scorer programmatically, do the following:

- Import the `AnnotationSpec` class from `weave.flow.annotation_spec`.

- Use the `publish` method from `weave` to create the scorer.

For example, consider two scorers. The first scorer, Temperature, is used to score the perceived temperature of the LLM call. The second scorer, Tone, is used to score the tone of the LLM response. Each scorer is created using `publish` with an associated object ID (`temperature-scorer` and `tone-scorer`).
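The two scorers could be created along these lines. This is a sketch under stated assumptions: the `name`, `description`, and `field_schema` arguments reflect how `AnnotationSpec` is commonly constructed, while the specific schema values (the numeric range and the tone labels) and the function name `publish_scorers` are illustrative and not taken from the text above.

```python
def publish_scorers(project: str) -> None:
    """Publish the Temperature and Tone human annotation scorers to `project`."""
    import weave  # deferred so this snippet can be loaded without the SDK
    from weave.flow.annotation_spec import AnnotationSpec

    weave.init(project)

    temperature = AnnotationSpec(
        name="Temperature",
        description="The perceived temperature of the LLM call",
        # Illustrative schema: a number between -1 and 1.
        field_schema={"type": "number", "minimum": -1, "maximum": 1},
    )
    tone = AnnotationSpec(
        name="Tone",
        description="The tone of the LLM response",
        # Illustrative schema: one of a fixed set of labels.
        field_schema={
            "type": "string",
            "enum": ["Aggressive", "Neutral", "Polite", "Friendly"],
        },
    )

    # The second argument is the object ID under which each scorer is stored.
    weave.publish(temperature, "temperature-scorer")
    weave.publish(tone, "tone-scorer")


# Usage (requires the `weave` package and W&B credentials):
#   publish_scorers("feedback-example")  # placeholder project name
```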


Modify a human annotation scorer using the API

Expanding on creating a human annotation scorer using the API, the following example creates an updated version of the Temperature scorer by using the original object ID (`temperature-scorer`) on `publish`. The result is an updated object, with a history of all versions.

You can view human annotation scorer object history in the Scorers tab under Human annotations.
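A sketch of such an update, assuming the scorer was originally published under the object ID `temperature-scorer`. The schema values and the function name `republish_temperature_scorer` are illustrative. The essential point is that publishing again with the same object ID is what produces the new version.

```python
def republish_temperature_scorer(project: str) -> None:
    """Publish a new version of the Temperature scorer under its existing ID."""
    import weave  # deferred so this snippet can be loaded without the SDK
    from weave.flow.annotation_spec import AnnotationSpec

    weave.init(project)

    updated = AnnotationSpec(
        name="Temperature",
        description="The perceived temperature of the LLM call",
        # Illustrative change: collect whole-number scores.
        field_schema={"type": "integer", "minimum": -1, "maximum": 1},
    )

    # Reusing the original object ID creates a new version of the same scorer.
    weave.publish(updated, "temperature-scorer")


# Usage (requires the `weave` package and W&B credentials):
#   republish_temperature_scorer("feedback-example")  # placeholder project name
```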


Use a human annotation scorer using the API

The feedback API allows you to use a human annotation scorer by specifying a specially constructed name and an `annotation_ref` field. You can obtain the `annotation_spec_ref` from the UI by selecting the appropriate tab, or during the creation of the `AnnotationSpec`.
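A hedged sketch of what this can look like. It assumes that the specially constructed name is the prefix `wandb.annotation.` followed by the scorer's name, that the payload is wrapped as `{"value": ...}`, and that `call.feedback.add` accepts `feedback_type`, `payload`, and `annotation_ref` keywords. Treat all three as assumptions to verify against your SDK version. `annotate_call` is a hypothetical helper.

```python
from typing import Any


def annotate_call(call: Any, annotation_spec: Any, value: Any) -> None:
    """Record a human annotation on a Call using a published scorer.

    `annotation_spec` is assumed to be a reference object exposing `.name`
    and `.uri()`, for example the result of `weave.ref("<annotation_spec_ref>")`.
    """
    call.feedback.add(
        feedback_type="wandb.annotation." + annotation_spec.name,  # assumed naming scheme
        payload={"value": value},  # assumed payload shape
        annotation_ref=annotation_spec.uri(),
    )


# Usage against a real project (requires the `weave` package and W&B credentials):
#   import weave
#   client = weave.init("feedback-example")  # placeholder project name
#   call = client.get_call("<call_uuid>")
#   annotate_call(call, weave.ref("<annotation_spec_ref>"), 1)
```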
